Geometry Shader

One day, I was thinking about how one would get a “huge hexagon map” to work in Unity3D (whether it is defined by heightmaps or by a procedural seed). Furthermore, the tiles (more precisely, their type, i.e. height and texture) should be changeable dynamically during gameplay (“Minecraft-ish” or “Timber-and-Stone-ish”).

One common approach (from what I have read) is to split the map into “map chunks” and, at runtime, create and show only the chunk objects (meshes) near the player. As the player moves, “far” meshes are deleted and new ones are created nearby (with the required information taken from, e.g., a heightmap).
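The chunk bookkeeping described above can be sketched as follows. This is a minimal, engine-agnostic Python sketch, not the author's code: the names `chunk_of`, `wanted_chunks` and `update_chunks`, the square neighborhood and the `radius` parameter are assumptions for illustration. It only decides which chunks to create and which to delete; building and destroying the actual meshes is left to the caller.

```python
CHUNK_SIZE = 8    # tiles per chunk edge (the 8x8 figure used in this post)
VIEW_RADIUS = 3   # hypothetical: chunks kept loaded around the player, per axis


def chunk_of(tile_x, tile_z, chunk_size=CHUNK_SIZE):
    """Return the (cx, cz) index of the chunk containing a tile.

    Floor division also maps negative tile coordinates to the correct chunk.
    """
    return (tile_x // chunk_size, tile_z // chunk_size)


def wanted_chunks(player_tile, radius=VIEW_RADIUS, chunk_size=CHUNK_SIZE):
    """All chunk indices inside a square of `radius` chunks around the player."""
    pcx, pcz = chunk_of(player_tile[0], player_tile[1], chunk_size)
    return {(pcx + dx, pcz + dz)
            for dx in range(-radius, radius + 1)
            for dz in range(-radius, radius + 1)}


def update_chunks(loaded, player_tile, radius=VIEW_RADIUS):
    """Diff the currently loaded chunks against the wanted ones.

    Returns (to_create, to_delete) as sets of chunk indices.
    """
    wanted = wanted_chunks(player_tile, radius)
    return wanted - loaded, loaded - wanted
```

Moving the player one chunk to the right (from tile (0, 0) to tile (8, 0) with `radius=1`) yields three chunks to create on the new side and three to delete on the old side; chunks that stay in range are left untouched.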

With some supporting techniques, I found that this works quite well (e.g. a background thread creates the data for the map chunk in question and passes it to the main Unity thread, which in turn creates and positions the actual GameObject).
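The hand-off between the background thread and the main thread can be sketched as a simple producer/consumer setup with two queues. Everything here is hypothetical: Python's `threading` and `queue` stand in for whatever mechanism is used on the Unity side, and `build_chunk_data` is a placeholder for the real heightmap or seed evaluation. As in the text, only the main-thread step touches the “scene”, and it creates a bounded number of objects per frame.

```python
import queue
import threading


def build_chunk_data(chunk, chunk_size=8):
    """Hypothetical placeholder for the expensive work: per-tile data for one chunk.

    Returns a flat list of dummy height levels; a real version would read the
    heightmap or procedural seed and assemble vertex data.
    """
    cx, cz = chunk
    return [(cx * chunk_size + x + cz * chunk_size + z) % 4
            for z in range(chunk_size) for x in range(chunk_size)]


def worker(requests, results):
    """Background thread: turn chunk requests into chunk data until a None sentinel."""
    while True:
        chunk = requests.get()
        if chunk is None:
            break
        results.put((chunk, build_chunk_data(chunk)))


def main_thread_step(results, scene, max_per_frame=1):
    """Called once per frame on the main thread.

    Takes at most `max_per_frame` finished chunks and "creates" them; the dict
    assignment stands in for creating and positioning the GameObject.
    """
    for _ in range(max_per_frame):
        try:
            chunk, data = results.get_nowait()
        except queue.Empty:
            return
        scene[chunk] = data
```

Capping `max_per_frame` is what keeps the frame rate stable: the expensive data generation happens off the main thread, and the main thread only pays for a fixed amount of object creation per frame.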

Because creating a game object is “atomic” (it cannot be split across several Update() calls), the map chunks need to be fairly small (let’s say 8×8 hex tiles). This keeps the frame rate stable (no hiccups or stuttering when shoveling vertex data from the Unity script over to the graphics card), but naturally results in a rather large number of map chunk objects (given that I don’t want to fade out the view after a short distance, e.g. by using fog).

A different concept would be to move (part of) the work of creating and adapting map chunks from the CPU to the GPU, i.e. into shaders. With Unity 4, geometry shaders are now available (they require Direct3D 11, i.e. Windows Vista or later; I am not sure about non-Windows systems). Geometry shaders can create (emit) vertices and faces on their own, without those having to be present in the input mesh beforehand (as they would be when creating a mesh programmatically).

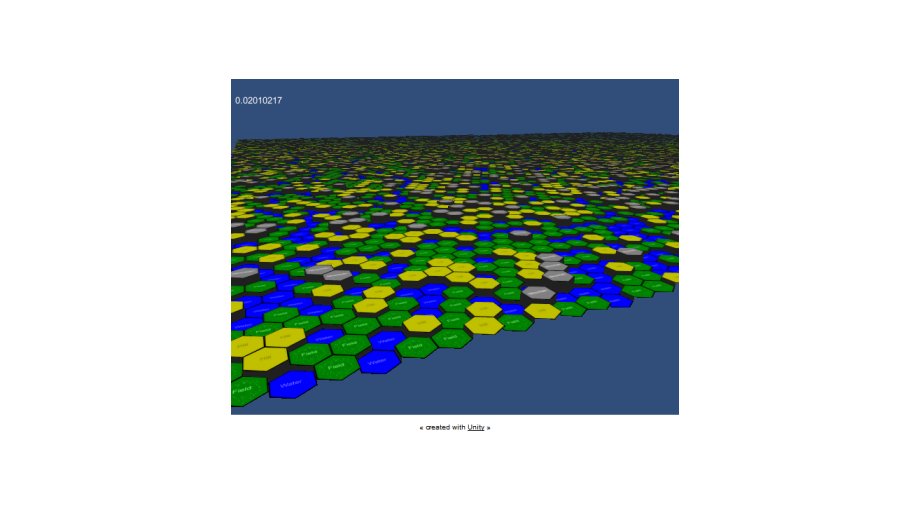

The test app below uses DX11 geometry shaders in Unity to create (additional) vertices and triangles on the fly. By changing a heightmap texture, the app can now change the terrain characteristics easily and quickly. The complete project, including the shader code, is available for download (many thanks to the people who commented on my thread in the Unity forums).

Test Case

First (and only once), a flat mesh is created programmatically. It consists of a plane with one(!) vertex at exactly the center position of each hexagon tile that will be shown later (in the test app, the grid is 128×128 tiles).
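Computing the tile center positions for such a point mesh could look like this. The post does not state the hex layout, so this sketch assumes pointy-top hexagons in an “odd-r” offset grid (every odd row shifted right by half a tile); `hex_center`, `build_center_mesh` and the `size` parameter (the hexagon's center-to-corner distance) are hypothetical names.

```python
import math


def hex_center(col, row, size=1.0):
    """Center of a pointy-top hexagon in an odd-r offset grid.

    Assumption: odd rows are shifted right by half a tile width;
    `size` is the circumradius (center-to-corner distance).
    """
    x = size * math.sqrt(3) * (col + 0.5 * (row & 1))
    z = size * 1.5 * row
    return (x, 0.0, z)  # flat plane: every vertex starts at height y = 0


def build_center_mesh(width=128, depth=128, size=1.0):
    """One vertex per hex tile, row by row (vertex index = row * width + col)."""
    return [hex_center(col, row, size)
            for row in range(depth) for col in range(width)]
```

With this layout, horizontally adjacent centers are `sqrt(3) * size` apart and rows are `1.5 * size` apart, so neighboring hexagons share an edge exactly.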

Each vertex gets its indices (X, Z) in the hex tile grid encoded in the r and g components of its color. This way, the information is transferred to, and can be used by, the shader code. The shader is assigned a heightmap and a texture (with different colors for the different terrain types/heights).
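The index-in-color trick can be illustrated with a small encode/decode pair. This sketch assumes 8-bit color channels (as with Unity's `Color32`), so each index must lie in 0..255, which a 128×128 grid satisfies; the shader would see the channels as normalized floats. `encode_indices` and `decode_indices` are hypothetical names, not the author's code.

```python
def encode_indices(x, z):
    """Pack tile indices (x, z) into the r and g channels of an RGBA color.

    Assumption: 8-bit channels, so each index must fit into 0..255.
    Returned as normalized floats, the way a shader would read them.
    """
    if not (0 <= x <= 255 and 0 <= z <= 255):
        raise ValueError("index does not fit into an 8-bit color channel")
    return (x / 255.0, z / 255.0, 0.0, 1.0)


def decode_indices(color):
    """Shader-side inverse: scale r and g back up and round to the nearest integer.

    Rounding (instead of truncating) absorbs small float errors such as
    126.99999 after normalization.
    """
    r, g = color[0], color[1]
    return (int(round(r * 255.0)), int(round(g * 255.0)))
```

The rounding step matters: truncating a value that arrives as 126.99999 would silently address the wrong tile.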

For each input vertex (= hex tile center position), the shader now creates a hex tile by emitting additional vertices and triangles. It then samples the given heightmap and adjusts the vertices’ heights and UVs to the required tile type. It also adds “side walls” where adjacent hex tiles have different heights.
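What the geometry shader emits per input point can be mirrored on the CPU for illustration. This Python sketch is not the author's shader: it assumes pointy-top hexagons, a six-triangle fan for the top face, and walls emitted only by the higher of two neighboring tiles (so each wall appears once); `emit_hex_tile` and its parameters are hypothetical, the heightmap sampling is reduced to passing in heights, and UVs are omitted.

```python
import math


def emit_hex_tile(cx, cz, height, neighbor_heights, size=1.0):
    """CPU sketch of the geometry emitted for one input point (a tile center).

    neighbor_heights[i] is the height of the tile across edge i
    (corner i -> corner i+1), or None at the map border.
    Returns (vertices, triangles) with vertices as (x, y, z) tuples.
    """
    verts = [(cx, height, cz)]              # index 0: raised tile center
    for i in range(6):                      # indices 1..6: top corners
        a = math.radians(60 * i + 30)       # pointy-top: corners at 30, 90, ... degrees
        verts.append((cx + size * math.cos(a), height, cz + size * math.sin(a)))
    # Top face as a triangle fan around the center vertex.
    tris = [(0, 1 + i, 1 + (i + 1) % 6) for i in range(6)]

    for i, nh in enumerate(neighbor_heights):
        # Assumption: only the higher tile emits the wall; skip border edges.
        if nh is None or nh >= height:
            continue
        top_a, top_b = 1 + i, 1 + (i + 1) % 6
        base = len(verts)
        xa, _, za = verts[top_a]
        xb, _, zb = verts[top_b]
        verts += [(xa, nh, za), (xb, nh, zb)]   # bottom edge at the neighbor's height
        # Wall quad between the top edge and the bottom edge, as two triangles.
        tris += [(top_a, base, top_b), (top_b, base, base + 1)]
    return verts, tris
```

A flat tile yields 7 vertices and 6 triangles; each lower neighbor adds 2 vertices and 2 triangles for its wall. In an actual geometry shader, the same vertices would be appended to the output triangle stream instead of a Python list.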

By simply switching the shader’s heightmap texture (or changing single pixels of it), the terrain layout can be changed (in the demo, this is done every frame in Update() by applying random noise).
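The per-frame noise can be sketched as changing a few random heightmap pixels to random discrete height levels. This is a hypothetical Python stand-in (`apply_random_noise`, `changes` and `levels` are assumptions); in Unity, one way to push such changes to the GPU would be `Texture2D.SetPixels` followed by `Texture2D.Apply`, though the post does not say which calls the demo uses.

```python
import random


def apply_random_noise(heightmap, changes=16, levels=4, rng=None):
    """Set `changes` randomly chosen pixels to random height levels 0..levels-1.

    `heightmap` is a list of rows (heightmap[z][x]) and is modified in place.
    Returns the set of changed (x, z) positions, i.e. the pixels that would
    have to be uploaded to the GPU texture again.
    """
    rng = rng or random.Random()
    depth, width = len(heightmap), len(heightmap[0])
    touched = set()
    for _ in range(changes):
        x, z = rng.randrange(width), rng.randrange(depth)
        heightmap[z][x] = rng.randrange(levels)
        touched.add((x, z))
    return touched
```

Since the mesh itself never changes, updating these pixels is all it takes; the geometry shader picks up the new heights the next time it runs.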

Downloads

| File | Date | Size | Remarks |
|---|---|---|---|
| 20121216_ShaderTest_Distribute.zip | 12/16/2012 | 176 KB | Unity3D v4 geometry shader test. Complete Unity 4 project, zipped. |